Data tables

This page is part of our section on Persistent storage & databases, which covers where and how to store and manage the data manipulated by Windmill. See that page for more data storage options.

Windmill Data Tables let you store and query relational data with near-zero setup using databases managed automatically by Windmill. They provide a simple, safe, workspace-scoped way to leverage SQL inside your workflows without exposing credentials. Data tables can also be used from full-code apps via SQL backend runnables and from the Database Studio app component for browsing and editing data visually.

Data tables are queryable as a typed schema from TypeScript, Python and DuckDB clients, and can be browsed, edited and used as a Postgres trigger source without ever exposing the underlying connection string to users.

Getting started

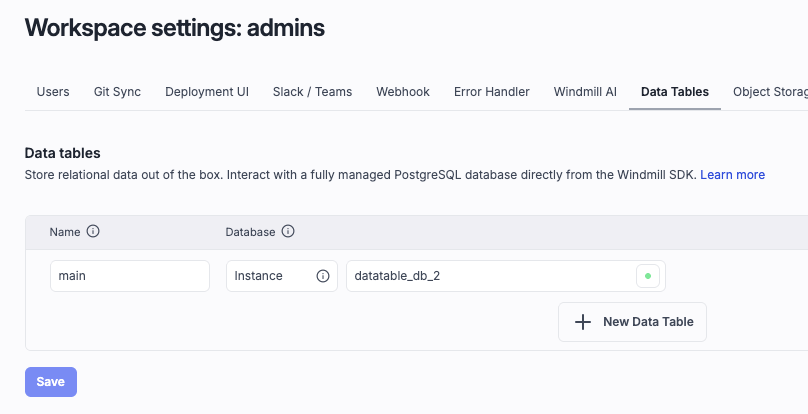

Go to workspace settings -> Data Tables and configure a data table.

Superadmins can use a Custom instance database and get started with no setup.

Usage

TypeScript:

```typescript
import * as wmill from 'windmill-client';

export async function main(user_id: string) {
  // let sql = wmill.datatable('named_datatable');
  let sql = wmill.datatable();

  // This string interpolation syntax is safe
  // and is transformed into a parameterized query
  let friend = await sql`SELECT * FROM friend WHERE id = ${user_id}`.fetchOne();
  // let allFriends = await sql`SELECT * FROM friend`.fetch();

  return friend;
}
```

Python:

```python
import wmill


def main(user_id: str):
    # db = wmill.datatable('named_datatable')
    db = wmill.datatable()

    # Postgres scripts use positional arguments
    friend = db.query('SELECT * FROM friend WHERE id = $1', user_id).fetch_one()
    # all_friends = db.query('SELECT * FROM friend').fetch()

    return friend
```

DuckDB:

```sql
-- $user_id (bigint)
-- ATTACH 'datatable://named_datatable' AS dt;
ATTACH 'datatable' AS dt;
USE dt;
SELECT * FROM friend WHERE id = $user_id;
```

We recommend having only one or a few data tables per workspace and using schemas to organize your data.

You can reference schemas normally with the schema.table syntax, or set the default search path with this syntax (Python / TypeScript):

```typescript
sql = wmill.datatable(':myschema'); // or 'named_datatable:myschema'
sql`SELECT * FROM mytable`; // refers to myschema.mytable
```

Query methods

Every sql`...`, sql.query(...) or db.query(...) call returns a statement object with four execution methods:

- fetch(): all rows from the last statement (default)

- fetchOne() / fetch_one(): first row only, or null / None if empty

- fetchOneScalar() / fetch_one_scalar(): first column of the first row

- execute(): run the query and return nothing

For multi-statement queries or scalar-only results, pass a result collection mode to fetch:

TypeScript:

```typescript
await sql`...`.fetch({ resultCollection: 'all_statements_all_rows' });
```

Python:

```python
db.query('...').fetch(result_collection='all_statements_all_rows')
```

Available modes: last_statement_all_rows (default), last_statement_first_row, last_statement_all_rows_scalar, last_statement_first_row_scalar, all_statements_all_rows, all_statements_first_row, all_statements_all_rows_scalar, all_statements_first_row_scalar.
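To make the mode names easier to read, here is a minimal sketch of how each mode could shape the raw per-statement results. It is inferred from the mode names and the method descriptions above, not taken from the Windmill client: `collect`, `Row` and the sample data are hypothetical, and the exact values Windmill returns may differ.

```typescript
// Hypothetical model: each statement yields an array of rows.
type Row = Record<string, unknown>;

type Mode =
  | "last_statement_all_rows"
  | "last_statement_first_row"
  | "last_statement_all_rows_scalar"
  | "last_statement_first_row_scalar"
  | "all_statements_all_rows"
  | "all_statements_first_row"
  | "all_statements_all_rows_scalar"
  | "all_statements_first_row_scalar";

function collect(statements: Row[][], mode: Mode): unknown {
  // The three parts of a mode name are read as independent switches
  const allStatements = mode.indexOf("all_statements") === 0;
  const firstRowOnly = mode.indexOf("first_row") !== -1;
  const scalar = mode.indexOf("_scalar") !== -1;

  const shape = (rows: Row[]): unknown => {
    // "scalar" keeps only the first column of each row
    const values: unknown[] = scalar
      ? rows.map((r) => r[Object.keys(r)[0]])
      : rows;
    // "first_row" keeps only the first entry, or null when there is none
    return firstRowOnly ? (values[0] ?? null) : values;
  };

  if (allStatements) return statements.map(shape);
  const last = statements[statements.length - 1] ?? [];
  return shape(last);
}

// Made-up results of a two-statement query
const statements: Row[][] = [
  [{ count: 2 }],
  [{ id: 1, name: "Ann" }, { id: 2, name: "Bob" }],
];

const lastAllRows = collect(statements, "last_statement_all_rows");
const lastFirstScalar = collect(statements, "last_statement_first_row_scalar");
const allFirstScalar = collect(statements, "all_statements_first_row_scalar");
```

In this model, `lastAllRows` holds both rows of the second statement, `lastFirstScalar` is the `id` of its first row, and `allFirstScalar` holds one scalar per statement.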

TypeScript also supports sql.query(sql, ...params) as an alternative to tagged-template syntax, matching the Python API:

```typescript
await sql.query('SELECT * FROM friend WHERE id = $1', user_id).fetchOne();
```

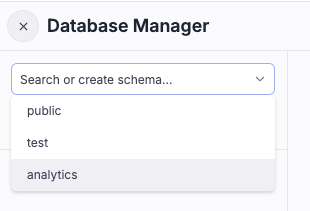

Schemas

Data tables support Postgres schemas to organize tables into logical groupings within a single data table. You can:

- Create, rename and drop schemas from the workspace settings Data Tables UI.

- Reference tables with the explicit schema.table syntax, or switch the default search path from the client by appending :myschema after the data table name (wmill.datatable('named_datatable:myschema'), or wmill.datatable(':myschema') for the default data table).

- Use schemas as an organizational boundary without needing multiple data tables. We recommend keeping the number of data tables per workspace small and using schemas for separation.
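The name:schema argument format can be pictured with a small parser. This is only a sketch of the convention described above: `parseDataTableRef` and `DataTableRef` are hypothetical names, not part of windmill-client, and the fallback to main assumes the default data table is the one named main.

```typescript
// Hypothetical representation of a parsed data table reference
type DataTableRef = { datatable: string; schema?: string };

function parseDataTableRef(spec: string = ""): DataTableRef {
  const idx = spec.indexOf(":");
  // Everything before ":" is the data table name, everything after is the schema
  const name = idx === -1 ? spec : spec.slice(0, idx);
  const schema = idx === -1 ? "" : spec.slice(idx + 1);
  return {
    // Assumption: an empty name means the default data table, "main"
    datatable: name || "main",
    schema: schema || undefined,
  };
}

const defaultWithSchema = parseDataTableRef(":myschema");
const namedWithSchema = parseDataTableRef("named_datatable:myschema");
const defaultOnly = parseDataTableRef();
```

Here `defaultWithSchema` resolves to the main data table with myschema as its search path, while `defaultOnly` leaves the schema unset.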

Type-checked queries

The Windmill editor understands your data table's schema and type-checks queries at edit time for TypeScript, Python and DuckDB scripts. The introspected schema (tables, columns, types) is surfaced in the editor's SQL IntelliSense, and invalid columns or type mismatches are flagged before the script ever runs.

String-interpolated parameters (e.g. sql`SELECT * FROM friend WHERE id = ${user_id}`) are always transformed into safe parameterized queries, so type checking covers both the SQL itself and the bindings.
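One way to picture this transformation is a tagged template function that replaces each interpolated value with a positional placeholder and collects the values separately. The helper `toParameterized` below is hypothetical and is not Windmill's implementation; it only illustrates why interpolated values never become part of the SQL text.

```typescript
// Illustrative only: not part of windmill-client.
type ParameterizedQuery = { text: string; values: unknown[] };

function toParameterized(
  strings: TemplateStringsArray,
  ...values: unknown[]
): ParameterizedQuery {
  let text = strings[0];
  values.forEach((_, i) => {
    // Each interpolated value becomes a $n placeholder, never SQL text
    text += `$${i + 1}` + strings[i + 1];
  });
  return { text, values };
}

// A hostile value stays a plain bound parameter
const userId = "42'; DROP TABLE friend; --";
const query = toParameterized`SELECT * FROM friend WHERE id = ${userId}`;
// query.text   is "SELECT * FROM friend WHERE id = $1"
// query.values is ["42'; DROP TABLE friend; --"]
```

Because the value travels separately from the query text, the database treats it as data regardless of its content.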

sql.raw for dynamic SQL fragments

Use sql.raw(value) to inline a string directly into the SQL query without parameterization. This is useful for dynamic table or column names that cannot be bound as parameters:

```typescript
const sql = wmill.datatable();

// `table` and `age` are defined elsewhere; here table = 'users'
await sql`SELECT * FROM ${sql.raw(table)} WHERE age > ${age}`.fetch();
// Produces: SELECT * FROM users WHERE age > $1::BIGINT
```

sql.raw is available on both wmill.datatable() and wmill.ducklake(). Since the value is inlined verbatim, ensure it comes from trusted input to avoid SQL injection.
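A common way to keep inlined identifiers trusted is to check them against a fixed allow-list before passing them to sql.raw. The sketch below assumes a hypothetical helper `assertAllowedTable` and made-up table names; adapt the list to the tables your script is meant to touch.

```typescript
// Hypothetical allow-list; the table names are examples only.
const ALLOWED_TABLES = ["users", "friend"] as const;
type AllowedTable = (typeof ALLOWED_TABLES)[number];

function assertAllowedTable(name: string): AllowedTable {
  // Reject anything that is not an exact match of a known table name
  if ((ALLOWED_TABLES as readonly string[]).indexOf(name) === -1) {
    throw new Error(`Table not allowed: ${name}`);
  }
  return name as AllowedTable;
}

const safeTable = assertAllowedTable("users");

// Intended usage with the client (not executed in this sketch):
// await sql`SELECT * FROM ${sql.raw(safeTable)} WHERE age > ${age}`.fetch();
```

An exact-match check is stricter than escaping or pattern validation, which suits a small, known set of tables.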

When a data table schema changes, the editor re-introspects it so downstream scripts immediately see the new columns and types without a manual refresh.

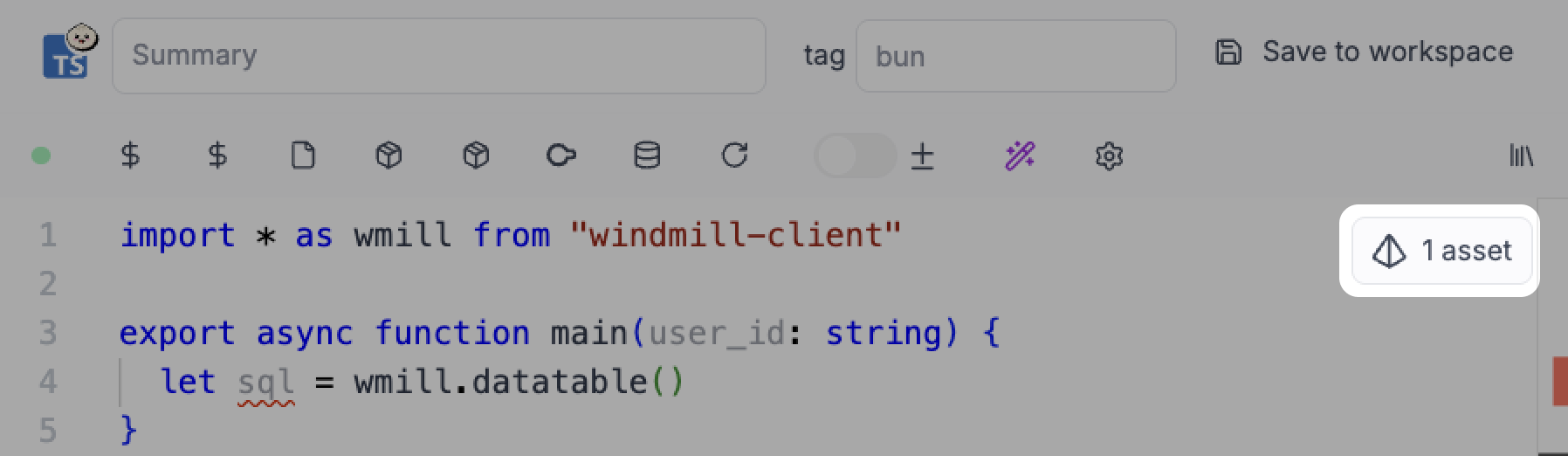

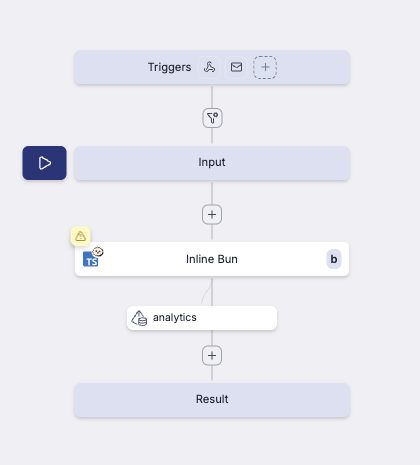

Assets integration

Data tables are assets in Windmill. When you reference a data table in a script, Windmill automatically parses the code and detects it. You can then click the detected asset to explore the data table in the Database Explorer.

Windmill also detects whether the data table is used in Read mode (SELECT ... FROM) or Write mode (UPDATE, DELETE ...). Assets are displayed as asset nodes in flows, making it easy to visualize data dependencies between scripts.
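As an intuition aid for the Read / Write distinction, a statement can be classified by its leading keyword. This toy classifier is an assumption for illustration only: Windmill's actual parser is not described here, `classifyStatement` is a hypothetical name, and treating INSERT as a write is this sketch's own choice.

```typescript
type AccessMode = "read" | "write" | "unknown";

function classifyStatement(sqlText: string): AccessMode {
  // Look only at the first keyword of the statement
  const match = sqlText.trim().match(/^([a-z]+)/i);
  const keyword = match ? match[1].toUpperCase() : "";
  if (keyword === "SELECT") return "read";
  if (["INSERT", "UPDATE", "DELETE"].indexOf(keyword) !== -1) return "write";
  // Anything else (for example a WITH clause) is left unclassified here
  return "unknown";
}

const readMode = classifyStatement("SELECT * FROM friend WHERE id = $1");
const writeMode = classifyStatement("  update friend set age = 22 where id = $1");
```

A real parser has to handle cases this sketch ignores, such as CTEs or multiple statements in one query.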

Database Studio integration

Data tables are a first-class source in the Database Studio app component. Select Data Table as the component's Type, then use the built-in table picker to choose from the workspace's configured data tables — no resource wiring required.

From there you get the full Database Studio experience (browse rows, inline edit, create/alter tables, schema explorer) on top of your data tables, without exposing the underlying credentials to the app builder or end users.

Use as a Postgres resource and trigger source

A data table that is backed by a Postgres resource (either the custom instance database or a workspace-managed Postgres resource) can be used anywhere a Postgres resource is expected — most notably as the source of a Postgres trigger. This lets you react to inserts, updates and deletes on tables managed by a data table without handing out the Postgres credentials.

Workspace-scoped

Data tables are scoped to a workspace. All members of the workspace can access its data tables. Credentials are managed internally by Windmill and are never exposed to users.

Special data table: main

The data table named main is the default data table. Scripts can access it without specifying its name.

Example:

```python
# Uses the 'main' data table implicitly
wmill.datatable()
```

Database types

Windmill currently supports two backend database types for Data Tables:

1. Custom instance database

- Uses the Windmill instance database.

- Zero-setup, one-click provisioning.

- Requires superadmin to configure.

- Although the database exists at the instance level, it is only accessible to workspaces that define a data table pointing to it.

- See Custom instance database for more details.

2. Postgres resource

- Attach a workspace Postgres resource to the data table.

- Ideal when you want full control over database hosting, but still benefit from Windmill's credential management and workspace scoping.

Permissions

Currently, Windmill does not enforce database-level permissions in data tables.

- Any workspace member can execute full CRUD operations.

- Table/row-level permissions may be introduced in a later version.

Windmill ensures secure access by handling database credentials internally.

Use on agent workers

wmill.datatable() and wmill.ducklake() work on agent workers out of the box, from both TypeScript and Python. Agent workers do not run the embedded internal Windmill server, so the clients automatically detect the execution environment and fall back from the inline preview path to a run-and-wait flow against the main Windmill API. Scripts that use data tables therefore remain portable between regular and agent workers with no code changes.